I had the pleasure of attending PyCon JP 2015, held in Heisei Plaza in Tokyo on October 9-10, with the theme “Possibilities of Python”. Although I am not really into Python (busy with Clojure & robots!), I thought it would be a good way to meet programming enthusiasts in Tokyo. And it was.

My first impression of the Python community is its diversity. Beyond gender diversity, I felt that Pythonistas (apparently what you call someone who writes Python) come from many different areas: I met people from the devops world (of course), but also researchers, roboticists, data scientists, linguists, game developers, startup owners, photographers and hackers of all sorts. Great community!

Now let me pick out a few talks and sessions that resonated with me.

My first (and favourite) talk was by Hideyuki Takei (@HideyukiTakei) on how to make a four-legged robot learn to move on its own using reinforcement learning in Python. His idea is simple but really great: let the robot “try out” different actuator settings and, based on a reward (basically, the total distance travelled), learn the “good” patterns (i.e. the ones that make the robot move forward). I thought this was wonderful. He uses the Gazebo robot simulator for learning (to avoid making the physical robot move so many times), and he showed off his robot walking. It was impressive to see a real motion pattern emerge after only 400 iterations!

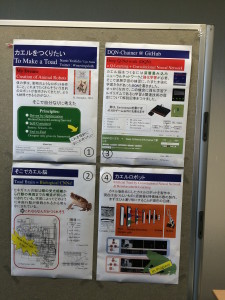
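To make the idea a bit more concrete, here is a minimal sketch of tabular Q-learning on a toy gait problem. This is not the code from the talk: the "simulator" (`simulate_step`), the leg ordering in `GOOD_NEXT`, and all the hyperparameters are assumptions I made up for illustration, standing in for Gazebo and a real robot. The state is the last leg that moved, the action is the next leg to move, and the reward is the (fake) distance travelled.

```python
import random

N_LEGS = 4

# Hypothetical "physics": moving the legs in this cyclic order makes the
# robot advance. A real setup would get this from a simulator such as Gazebo.
GOOD_NEXT = {0: 2, 2: 1, 1: 3, 3: 0}


def simulate_step(last_leg, leg):
    """Toy stand-in for the simulator: distance gained by moving `leg`."""
    return 1.0 if GOOD_NEXT[last_leg] == leg else -0.2


def train(episodes=400, steps=20, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn a table q[state][action] with epsilon-greedy Q-learning."""
    rng = random.Random(seed)
    q = [[0.0] * N_LEGS for _ in range(N_LEGS)]
    for _ in range(episodes):
        state = rng.randrange(N_LEGS)
        for _ in range(steps):
            if rng.random() < epsilon:
                # Explore: try a random leg.
                action = rng.randrange(N_LEGS)
            else:
                # Exploit: pick the leg with the best estimate so far.
                action = max(range(N_LEGS), key=lambda a: q[state][a])
            reward = simulate_step(state, action)
            next_state = action
            target = reward + gamma * max(q[next_state])
            q[state][action] += alpha * (target - q[state][action])
            state = next_state
    return q


def greedy_gait(q, start=0, length=8):
    """Follow the learned table greedily and return the leg sequence."""
    gait, state = [], start
    for _ in range(length):
        state = max(range(N_LEGS), key=lambda a: q[state][a])
        gait.append(state)
    return gait


if __name__ == "__main__":
    print(greedy_gait(train()))
```

The robot is never told which order is correct; it only sees the reward, and the repeating leg pattern emerges from the table. With a real simulator the state and action spaces would of course be much richer than four legs.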

My next pick is actually a poster session by Ugo-Nama (@movingsloth), who presented his attempt to create a toad robot, focusing in particular on modelling how the toad perceives the world. He is using (again) Q-learning, together with a CNN (convolutional neural network), to analyse the toad's visual input and deduce whether it sees a “prey” or a “predator”. This information can then be transformed into actionable goals, such as “jump”, “run”, etc. His work is based on the research of J.P. Ewert, who studied the neurophysiological foundations of behavior.

I also liked the talk by Tomoko Uchida (@moco_beta) on natural language processing, and in particular on morphological analysis using Python. This appealed to me because splitting a sentence into words turns out to be more difficult in Japanese than in, for example, Western languages, where words are clearly separated by spaces. She explained how she built janome, a morphological analysis library written in Python. I tried it out, and yes, it parses the following sentence correctly!

```python
>>> from janome.tokenizer import Tokenizer
>>> t = Tokenizer()
>>> for token in t.tokenize(u'すもももももももものうち'):
...     print(token)
...
すもも 名詞,一般,*,*,*,*,すもも,スモモ,スモモ
も 助詞,係助詞,*,*,*,*,も,モ,モ
もも 名詞,一般,*,*,*,*,もも,モモ,モモ
も 助詞,係助詞,*,*,*,*,も,モ,モ
もも 名詞,一般,*,*,*,*,もも,モモ,モモ
の 助詞,連体化,*,*,*,*,の,ノ,ノ
うち 名詞,非自立,副詞可能,*,*,*,うち,ウチ,ウチ
>>> ^D
```
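To see why this sentence is a nice test case, here is a small sketch that enumerates every way of splitting it into words from a tiny dictionary. The five-word `LEXICON` is my own toy assumption, and this brute-force enumeration is not how janome works internally; it only shows that, without spaces, a dictionary alone leaves many candidate splits, and an analyser has to pick the right one.

```python
def segmentations(text, lexicon):
    """Return every way to split `text` into a sequence of lexicon words."""
    if not text:
        return [[]]
    results = []
    for end in range(1, len(text) + 1):
        word = text[:end]
        if word in lexicon:
            # Keep this word and recursively split the remainder.
            for rest in segmentations(text[end:], lexicon):
                results.append([word] + rest)
    return results


# Toy lexicon, assumed for illustration only (not janome's dictionary).
LEXICON = {"すもも", "もも", "も", "の", "うち"}

SENTENCE = "すもももももももものうち"

if __name__ == "__main__":
    candidates = segmentations(SENTENCE, LEXICON)
    print(len(candidates), "candidate segmentations")
    for words in candidates:
        print(" / ".join(words))
```

The split that janome returned above is one of the candidates printed here. Choosing it over the others requires extra knowledge, such as part-of-speech information about which words can follow which.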

I also attended many other talks and poster sessions, including “How to participate in programming contests and become a strong programmer” by Chica Matsueda, “Building a Scalable Python gRPC Service using Kubernetes” by Ian Lewis, “Finding related phrases on large amount of tweets in real time using Tornado/Elasticsearch” by Satoru Kadowaki, “Python and the Semantic Web: Building a Linked Data Fragment Server with Asyncio and Redis” by Jeremy Nelson, “Let it crash: what Python can learn from Erlang” by Benoit Chesneau, a panel session on diversity, “How to develop an FPGA system easily using PyCoRAM” by Shinya Takamaeda, “Sekai no Kao” (the Face of the World) by Hideki Tanaka et al., and “Rise of the Static Generator” by Justin Mayer.

Lots of great content, but especially great people. Many thanks to the organisers for such a great conference. I’m hoping to attend PyCon 2016… and maybe submit a talk?